First impressions

Let’s get this out of the way: Of course I preordered one. I mean, have we met? I like my new and exciting technology. Always have, always will. And the AVP is amazing on paper. The most advanced consumer headset ever, oodles of cameras, gesture tracking, eye gaze tracking, insanely-high-resolution OLED screens.

I was out of town, so I just got to it this morning. These are my first impressions, after using it on and off for a day. There are plenty of reviews telling you how things work, how you operate the software, etc. Go read them. For a breathless take, read Daring Fireball. For a balanced take, read The Verge’s. Both are engaging and very well written. Rather than attempt a systematic review, I’m just sharing my impressions.

The hardware

Much has been said about the hardware. It is immaculately built, and it’s solid as if the entire thing was carved from a single block of aluminum. It’s solid like closing the door on a luxury German car. If this had a door, and it could close, it would go ker-chunk.

The box is big. BIG.

When you open it, it’s beautifully displayed.

Then you lift it, and the overwhelming first impression is “wow, this is solid. And heavy. HEAVY.”

Removing the battery from the box was also surprising because of the heft. Again, solid. No question. But HEAVY.

Looking at the device itself, the amount of tech packed in starts to reveal itself. I count at least four cameras per side, plus IR and LIDAR sensors.

The design language itself is strange. It feels, to me, very ‘iPhone 6S meets AirPods Max meets Apple Watch’. The Solo Knit Band is a nice fabric. In general, the entire thing oozes quality.

Putting it on, a few things become clear quickly.

- You’ve never worn a VR headset like this. There’s no screen-door effect (the black grid between pixels). Nada. Zilch. None.

- The software is still a work in progress. For example, some windows tend to show up sideways on mine. Some things clip through weirdly. This is fine. As someone who writes much, much, much simpler software, I get it. It’ll get fixed.

- The interaction paradigm is not natural, but it’s easy to learn.

- Holy cow, it’s heavy.

The incredibly good

The screens

The screens inside the headset are probably delivered to Apple’s factories by time machine, from a decade in the future. They are almost incomprehensibly detailed. The field of view is decent, but roughly the same as other headsets. You’re looking through a periscope. Part of your world is just missing.

TV

You have NEVER had a TV this good. You have not. I have a wonderful 65″ LG OLED TV. It has superb image quality. It is not as good as the Vision Pro.

I opened the Disney+ app, selected a ‘setting’ (the Disney+ theater) and watched the season finale of Percy Jackson. The theater looks a bit cartoonish, but of course you can look all around, and you’re seated in the best spot in the house. When you hit ‘play’ the screen dims, and you have the show on a giant movie screen right in front of you. It’s like going to a theater, for real, with pretty much identical image quality, but I had my cat on my lap.

It really is that good.

I also watched part of Thor: Love and Thunder in the same theater. It’s available in 3D, and the AVP finally makes 3D movies make sense. It is so natural, and so seamless, that it just works, which is the highest compliment I can think of.

The content-consumption experience is also bolstered by…

The audio

I don’t know how this works. As far as I can tell, it’s black magic. Those little pods on the bands on the sides of the AVP with a black strip pointing towards your ears have drivers woven out of unicorn hair. The sound is so good it’s hard to believe. It’s loud, and full, and richly directional. It does a passable impression of an actual good theater’s sound system, and that’s very high praise.

I don’t know audiophile words. It just sounds so good to me that I wish I could always watch TV or movies at home like this.

It is an open design, so everyone else will hear whatever you’re hearing. If that’s not ok, AirPods are basically required.

Spatiality

Windows stay where you left them, and it’s a pretty solid implementation. They don’t move. You can get very close to them. You can look at them from the side (they’re 2D planes in a 3D world, so they’re just a line from the side) or the back (a white featureless rectangle).

Vector graphics

Finally, in the year 2024, a mainstream UI from a big computer company is rendered completely using resolution-independent vector graphics. It is gorgeous.

Immersive experiences

Select the placid surface of a lake where rain is falling to work in a contemplative setting. The audio sells the illusion of rain perfectly. You can look all around you, and thanks to the screens’ incredible resolution, it’s almost like being there. It’s beautiful.

Any panorama you’ve captured with an iPhone can be projected on a cylinder wrapped around you. Even though they don’t have enough detail and you can see compression artifacts, the result is incredibly immersive. It feels like standing there.

The spatial videos? Like you see in the commercial, where the dad is reliving his child’s birthday party? Other than TV, this might actually be the killer app for the AVP.

Imagine a square mirror, suspended in the air in front of you, its surface impossibly pristine and flat. Inside it, you see a moment from the past, in three dimensions. People laugh, talk, move around. It feels like you opened a window to another time and are peering through it. It should be experienced, with your own recording, to be believed.

I want to capture all important moments as spatial video now. It’s simply that good and, with time, it’ll get better.

The good-ish

Video passthrough

I own a Meta Quest 2. It has a couple of cameras on the outside and it’ll give you a grainy black-and-white view of the world outside the headset if you’re about to crash into something, or if you tap it twice on the side. It’s useful, but terrible and laggy.

The AVP has video passthrough so good people are calling it an ‘Augmented Reality’ headset. It is not. Your eyes are inside a closed dark box, and they get fed the output of a chip that takes images from the outside cameras and shovels them at your eyes as fast as possible.

I was never, for a single second, able to forget I was looking at a screen inside a dark box. You can see the world, and it works. I can read the screen on my phone quite well. I can look at my watch. For some reason, I can’t see the stuff on my computer monitor well. But I can walk around and function normally with the headset on.

You may have seen those videos of people walking on the street, or (dangerously, irresponsibly, idiotically) driving a car with Vision Pro on. Is it doable? Probably. Will you forget it’s not the real world? Not for a moment. Your situational awareness WILL be impaired; much more if you’re watching a video or trying to use Safari.

There are three issues with the video passthrough. The first is the resolution and dynamic range of the cameras and, to a much lesser extent, the screens. The second is processing latency. The third is field of view.

The cameras clearly don’t have enough resolution or color accuracy to render the real world in all its glorious detail inside the headset. When you watch professionally-produced content, you can count the hairs in the actors’ eyebrows; the screens are That Good. But the cameras are not. The world is rendered ever-so-slightly fuzzy, and the colors are a little wrong. It doesn’t help that I’m in a basement. Light is scarce here. But even if you go outside, with the sun there, it’s not as good as the real thing.

There’s also a barely-perceptible amount of latency. Apple claims 12 ms, less than one 60 Hz frame. But it’s there. Something in your body KNOWS that the image you’re seeing took a more circuitous route than light source – photon – retina. To be clear, I’m not claiming you can see a sub-frame delay; that’s ridiculous. What I AM claiming is that the light entering a camera, striking a sensor, being converted to digital data, processed, integrated, and then sent to an OLED display that has to light up pixels, in order to make photons that hit your retina, is slower than skipping the electronics. And it is: about 12 ms. And I, at least, seem to perceive it as ‘it is a little bit off’. Not enough to cause nausea. Not enough to be a problem. But enough that it feels slightly, very slightly but noticeably different.
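
For the numerically inclined, here is the arithmetic behind ‘less than one 60 Hz frame’ as a tiny Python sketch. The 12 ms figure is Apple’s claim as quoted above, and 60 Hz is only the reference rate used in the comparison, not a statement about the headset’s actual refresh rate. The function names are made up for illustration.

```python
# Back-of-the-envelope check: how does a 12 ms passthrough delay compare
# with the duration of one frame at 60 Hz?
# Assumptions: 12 ms is Apple's claimed latency as quoted in the text;
# 60 Hz is the reference frame rate from the text, nothing more.

PASSTHROUGH_LATENCY_MS = 12.0  # claimed camera-to-display latency
REFERENCE_RATE_HZ = 60.0       # reference frame rate for the comparison


def frame_time_ms(rate_hz: float) -> float:
    """Duration of one frame, in milliseconds, at the given rate."""
    return 1000.0 / rate_hz


def latency_in_frames(latency_ms: float, rate_hz: float) -> float:
    """Express a latency as a fraction of one frame at the given rate."""
    return latency_ms / frame_time_ms(rate_hz)


if __name__ == "__main__":
    one_frame = frame_time_ms(REFERENCE_RATE_HZ)
    fraction = latency_in_frames(PASSTHROUGH_LATENCY_MS, REFERENCE_RATE_HZ)
    print(f"One frame at 60 Hz lasts {one_frame:.2f} ms")
    print(f"12 ms is {fraction:.2f} of that frame")
```

So the delay is roughly three quarters of a single 60 Hz frame: small, but not zero, which is all the paragraph above is claiming.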

The field of view doesn’t bother me, but its limitation is always there. I guess some people can tune it out. I feel like I’m looking through a periscope. More complicated lenses and larger, probably curved, screens will help here in the future. It’s not a big deal, but peripheral awareness is definitely missing, and that breaks immersion. Perhaps even just an extremely low-resolution peripheral screen, or just color LEDs that replicate the main colors in a scene, could help.

Replace a Mac screen

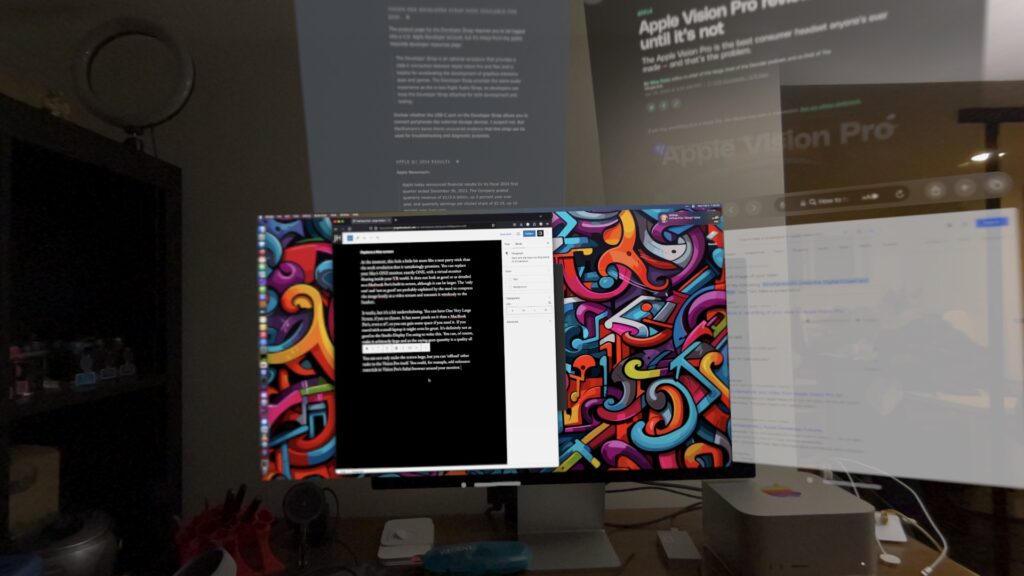

At the moment, this feels a little bit more like a neat party trick than the work revolution that it tantalizingly promises. You can replace your Mac’s ONE monitor, exactly ONE, with a virtual monitor floating inside your VR world. It does not look as good or as detailed as a MacBook Pro’s built-in screen, although it can be larger. The ‘only one’ and ‘not as good’ are probably explained by the need to compress the image lossily as a video stream and transmit it wirelessly to the headset.

It works, but it’s a bit underwhelming. You can have One Very Large Screen, if you so choose. It has more pixels on it than a MacBook Pro’s, even a 16″, so you can gain some space if you need it. If you travel with a small laptop it might even be great. It’s definitely not as good as the Studio Display I’m using to write this. You can, of course, make it arbitrarily large and, as the saying goes, quantity has a quality all its own.

You can not only make the screen large, but you can ‘offload’ other tasks to the Vision Pro itself. You could, for example, add reference materials in Vision Pro’s Safari browser around your monitor.

In practice, if you have a good, big monitor, you probably don’t want to do this. Not with the current state of the feature. It has promise.

The bad

There’s a few things I don’t like so much about Vision Pro.

One is that it tends to lose track of my hands. If I have them hanging at my sides, as apparently I do, it can’t track them. If they’re in my lap, it’s pretty reliable, even with low light. But I don’t put them naturally where the device wants them, and it’s jarring when I’m tapping uselessly.

Another is text input. You can pair a Bluetooth keyboard with Vision Pro, but I don’t have a spare one at the moment. Without one, your choices are dictation or the virtual keyboard. For me, dictating is very hit-and-miss. I have an accent; Apple’s devices tend to understand me until they suddenly don’t and they make a mess, and it’s tedious. The worst option by far is the onscreen keyboard. It’s gaze-and-tap-your-fingers or hunt-and-peck-in-the-air, and I don’t know which of the two interactions is more miserable. For the occasional URL or password it’ll do in a pinch. For a sentence or more, please dictate or pair a keyboard. You’ll go insane if you have to type a paragraph with the virtual, floating keyboard.

You can run many, but not all, iPad apps inside Vision Pro. The developer has to authorize it. You can use a paired keyboard, if you have one, and a Magic Trackpad, to interact with them like you can on an iPad itself. And I heartily recommend that you do. iPad apps may or may not highlight gaze targets. I imagine it depends on whether they use native controls. In 1Password, at least, you’re always guessing if you’re going to activate the correct entry. The native AVP app pickings are very slim at the moment, and not even Apple has made all the apps they distribute on Vision Pro AVP-native; several of them are iPad apps.

You DO get some native productivity mainstays, like Keynote, which I love, and Office 365. You get the Apple TV+ app and Disney+, which work really well. Beyond that… not much more that interests me at the moment.

The ugly

Weight/fit

The first one has to be the weight. Holy Mary Mother of God does this thing have weight, and it is all frontloaded. It does NOT sit comfortably on my face. My face hurts after a while, and so does my head. The bands are TIGHT to keep it in place. That does not spark any joy. I wonder if the spacer and headbands that the Apple Store app recommended are just too small for my giant head. Anyone who’s met me agrees I’m not a “medium.” I can’t buy hats off the rack.

In fact, I hope they are a mistake, because if this is the correct size then this beautiful device is not for me.

The eyes

The EyeSight (ugh) display is just bad. It’s so blurry and dim that it’s impossible to really SEE any eyes in it, and those that you do see look kinda terrible.

I tried looking at other people. Other people tried looking at me. We all agree, it’s capital-B bad. This is a project that was turned in incomplete, because the deadline arrived.

I get what Apple’s trying to do here. I respect the idea of providing a mechanism for interacting with humans while you’re wearing these digital ski goggles. It’s a line in the sand, staking a claim that ‘we do not want to isolate you from everyone else.’ As such, it’s intriguing, and I hope they succeed eventually. This implementation does not accomplish the goal. I don’t think this will be fixable in software, either. The screen’s too dim and low-resolution.

I imagine this will eventually get solved by having transparent goggles/glasses that can dim completely on demand. That would also get rid of all the jumping-through-hoops that video passthrough represents.

My eyes are dry!

My eyes are really dry, and they even hurt. Wearing Vision Pro for more than 30 minutes at a time is basically impossible. I’m taking frequent breaks, but I have known-fussy eyes; I can’t wear contacts, for example. If this continues, I’ll have to return the device because I can’t really use it for real this way.

The price

In terms of tech per dollar, the price is not completely obscene. This thing is a technological miracle, warts and all. I am amazed that it exists, that it can be manufactured at scale, and that I can have one. The promise of Vision Pro is phenomenal. The investment and ambition this headset represents are mind-boggling. Apple tends to stick with their platforms, and I sure hope to see what they have in mind for the next iteration.

But it’s still a lot of money in absolute terms. Unless you’re a bona-fide early adopter like yours truly, probably stay away.

Taking it off

My eyes hurt because they’re dry, my face hurts because of the weight, my scalp hurts because of the fit of the headbands (yes, both are bad). Taking the headset off is a relief. The world is more colorful and wider. I don’t look like an absolute tool.

And yet.

Grabbing software and throwing it in the world around you with your hand is how things SHOULD be, something in my brain says. OF COURSE grabbing Safari and putting it -there- and then picking up Word and putting it in front of me, and walking a Notes window to the other end of the room and leaving it there is empowering, exhilarating, and there is an obvious This Is How It Should Be about it that feels, perhaps, as profound as the first time we collectively grabbed a smartphone and realized that having the Internet in the palm of your hand, everywhere, was correct. Never mind that it had some pretty bad knock-on effects; that’s not the point here.

Conclusion, kinda, sorta, after one day

What am I going to do, then, with this expensive, futuristic headset I can’t use that much? I suspect I’m going to go to the actual Apple Store, and see if they have other spacers and headbands to try, because the fit and weight distribution can’t possibly be this bad, or everyone would be complaining about it.

Then I’ll see if my eyes decide that Vision Pro is ok for them. If they don’t, well, I can’t use it.

So there are two roads that end in a return. C’est la vie. I hope I can use a future iteration if this one doesn’t work out.